Sensemitter is now Emhance.

Mobile Game Playtesting: The Complete Guide for Growth and Product Teams

Why mobile game playtesting fails most teams

You ran the playtest. Everyone watched some footage. The research deck landed in a Confluence doc. Three sprints later, D1 retention is the same.

This is not a playtesting problem. It is a diagnosis problem.

Most mobile game playtesting produces observation — what players clicked, where they hesitated, what they said when asked. What it rarely produces is the actual cause: the exact moment in the session where engagement collapsed, before the player consciously registered it, before the drop appeared in your retention curve.

That gap between observation and diagnosis is where D1 targets are missed quarter after quarter. Studios cycle through individual UI changes, iterate on pop-up copy, A/B test button colours — while the structural issue driving churn stays invisible.

There is a second failure mode that compounds this one: teams that treat playtesting as a milestone event rather than an ongoing research capability. A playtest commissioned two weeks before soft launch, when the build is nearly final and the team is in crunch, is producing findings at the most expensive possible time. Changes are costly, sprint cycles are closed, and the release date creates pressure to ship regardless of what the data shows. The studios that move the fastest on retention don't run playtests at milestones. They run narrow, focused studies earlier — when a finding about the tutorial structure costs one sprint to fix, not a delayed launch.

One mobile RPG studio spent several sprints iterating on individual tutorial pop-up screens while D1 retention didn't move. Emhance's engagement data showed boredom states appearing at every instruction screen: the problem was sequence design, not any individual element. Reducing 12 pop-ups to 6 and restructuring to just-in-time teaching raised tutorial completion by 12% and D1 retention by 4–6 percentage points. The full diagnostic is in the Mobile Game Onboarding Playtesting guide.

The team would have kept iterating on the wrong thing indefinitely.

What mobile game playtesting actually is (and what it isn't)

Mobile game playtesting is a structured research method in which real players engage with your game under defined conditions, while their behaviour and responses are recorded and analysed. At its best, a playtest answers a specific hypothesis: "Is the onboarding sequence creating enough investment before the mechanic tutorial?" or "Does the pacing of levels 3–5 hold engagement, or is something breaking before D7?"

What playtesting is not:

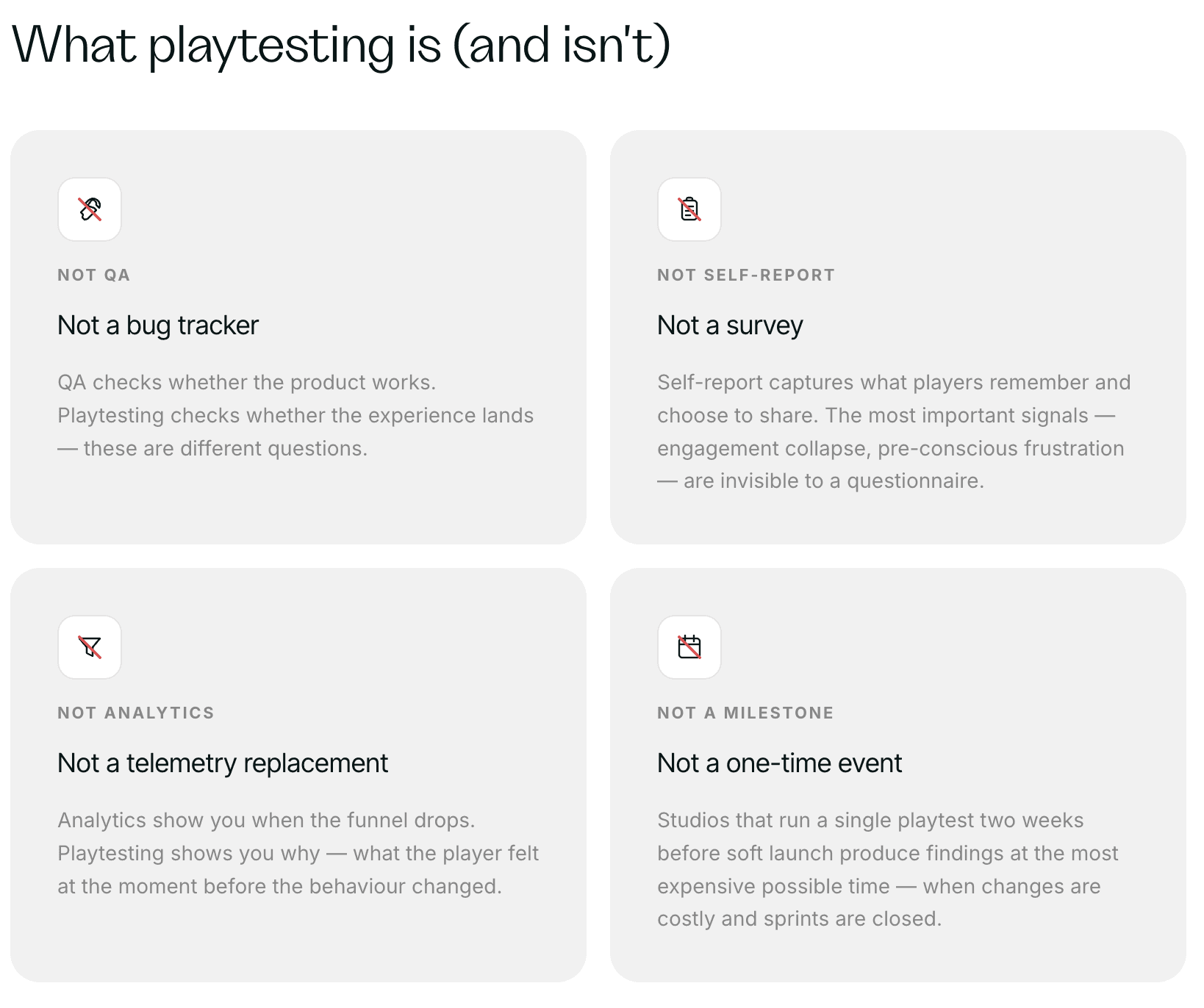

Not just QA. Playtesting is not primarily about finding bugs. QA checks whether the product works. Playtesting checks whether the experience lands.

Not a telemetry replacement. Your analytics show you when the funnel drops. Emotion-intelligent playtesting shows you why it drops: what the player felt in the moment before the behaviour changed.

Not a survey. Self-report captures what players remember and choose to share. The most important signals — the moment engagement collapsed, the specific mechanic that created frustration before the player consciously registered it — are invisible to a questionnaire.

Not a one-time event. Studios that treat playtesting as a quarterly research ceremony miss the window where findings are most valuable: before the sprint closes, not after launch.

For a fuller comparison of playtesting versus broader user research approaches — and a practical framework for deciding which to run — see Mobile Game User Research vs. Playtesting.

The observation vs. diagnosis gap: why standard playtests leave you stuck

Standard unmoderated playtesting — the industry default — gives you footage, maybe a transcript, and (at best) a set of AI-generated tags. Skilled researchers synthesise these into findings. The findings go into a report. The report says: "Players seemed confused at the prestige unlock screen."

Now what? Which element on the screen? What kind of confusion? Was it friction (the UI isn't clear), or was it overwhelm (the player doesn't have enough context yet)? If it's friction, you update the UI. If it's overwhelm, you remove the screen from the FTUE entirely. These are different fixes with different development costs.

The diagnosis requires knowing what players felt at that moment — not what they said about it afterwards, and not what the video appeared to show.

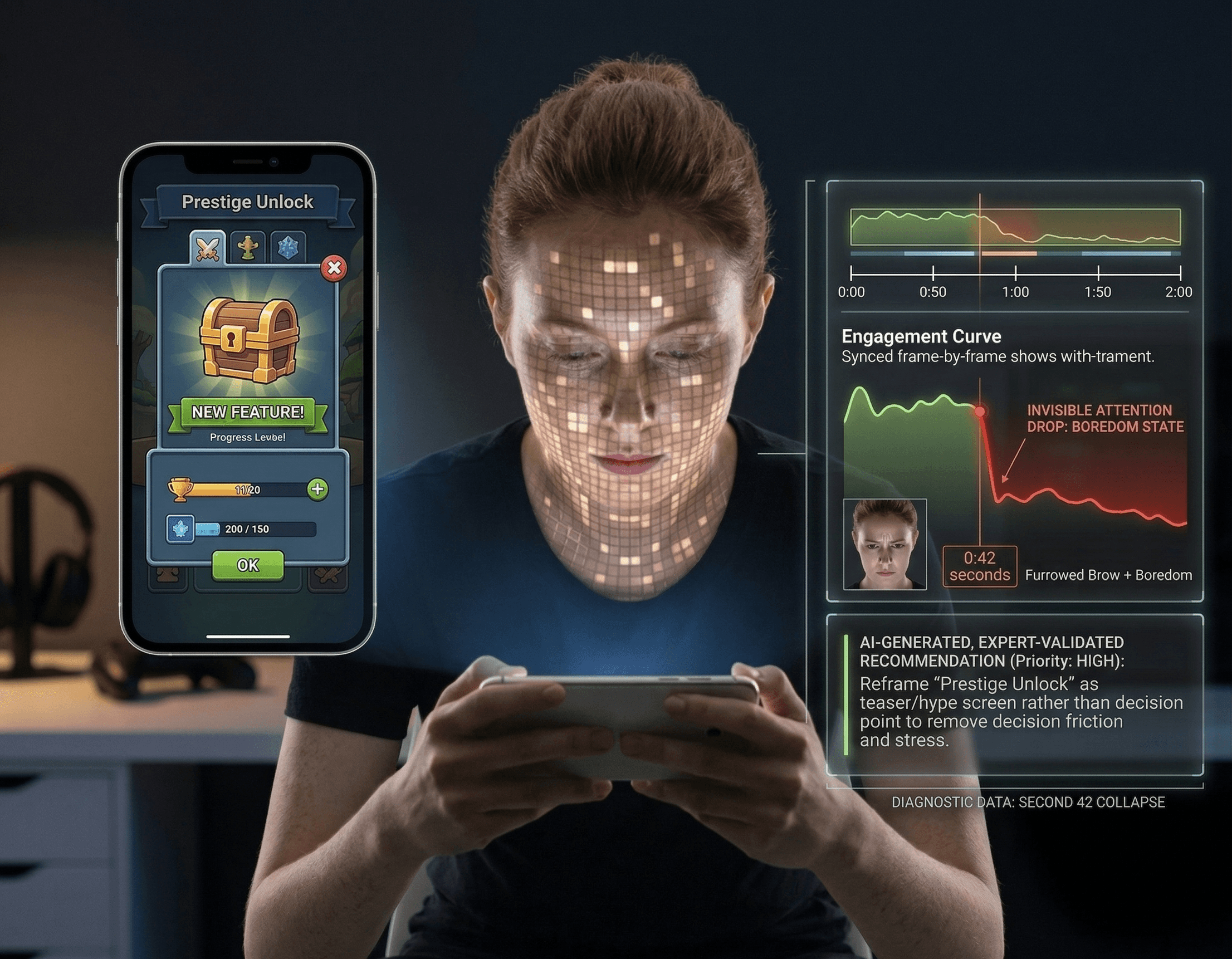

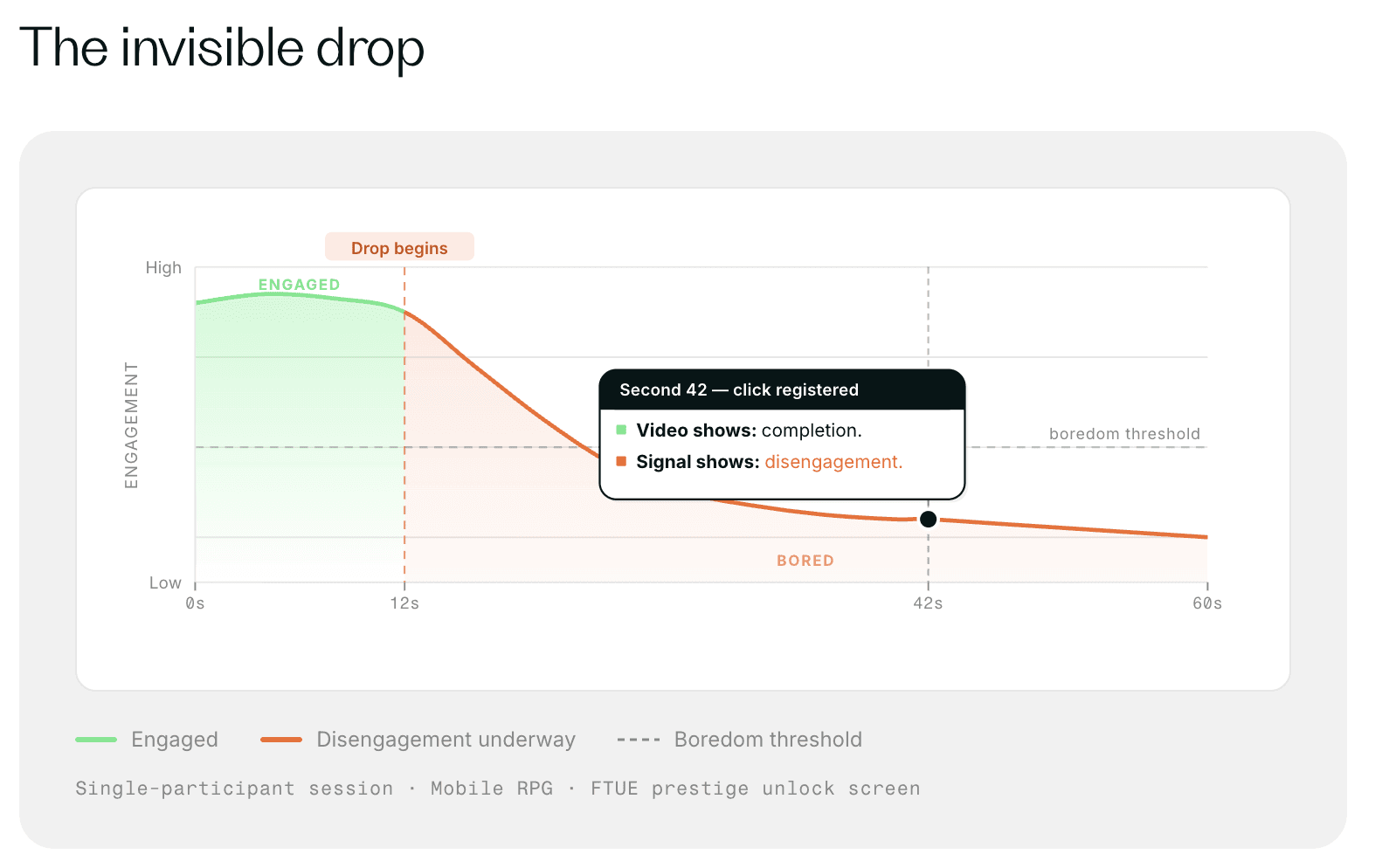

One studio found an attention drop at second 42 of their FTUE — specifically at a prestige unlock screen. Players were clicking through, so the video showed "completion." But engagement had already collapsed 30 seconds before the click. The screen was intended to create excitement about future game depth; it was landing as stress, not delight. New players had no context for the item being shown and felt they were being evaluated on a decision they weren't equipped to make. The fix was reframing the screen as a teaser rather than an instruction — a small change with a measurable D1 retention improvement, shipped within the same sprint.

Without second-by-second engagement data, this moment is invisible. The video shows a click. The transcript might show mild confusion. Only the involuntary signal shows the attention drop and the emotional state driving it.

The Emhance approach to mobile game playtesting

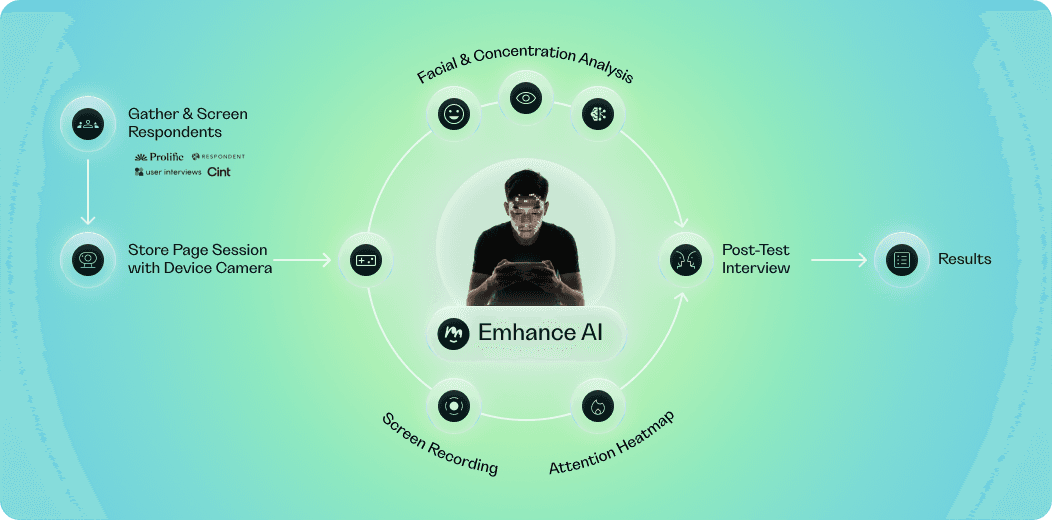

Emhance is an emotion intelligence research suite built specifically for mobile game studios. It combines AI-powered biometric analysis — reading facial expressions and eye movement from standard webcams — with an expert research methodology to produce a second-by-second engagement timeline across every session.

The methodology does not require specialist hardware. Participants play on their own devices, in their own environment, through a standard browser session. They do not wear sensors or visit a lab. This matters for research validity: a player on their phone at home is not the same as a player wearing electrode caps in a controlled room.

How a study works:

Research design. Emhance's research team works with you to define the hypothesis, study type, session length, and participant profile. The question being answered should be specific: not "is the FTUE working" but "does the tutorial mechanic sequence create enough player agency before the instruction load peaks?"

Participant recruitment. Participants are sourced from multiple respondent pools and validated against your audience criteria: genre experience, device type, geography, demographics. Niche audiences (specific genres, experience levels) can be sourced.

Sessions (unmoderated). Players receive a testing link, enable their webcam (with consent), and play naturally. No guidance. No moderation during gameplay. The absence of a facilitator is the point: involuntary signals captured without a social performance layer.

Neural net processing. Facial recordings are processed through two proprietary pipelines: an Emotion Neural Net (classifying emotional intensity per second from facial microexpressions, with cultural and physiological bias correction applied) and an Attention Neural Net (tracking gaze and blink suppression as a concentration signal). The system processes 421+ biometric data points per second, per participant, at ~91.67% accuracy on the ADFES benchmark.

Engagement/boredom classification. Raw signal is not the product. Emhance's proprietary methodology — built on 300+ real-world game studies — classifies second-by-second player state as engaged or bored, with noise removal, normalisation, and contextual smoothing applied.

Findings and recommendations. Delivered in the Emhance platform: engagement curves, face and screen recordings synced to the engagement timeline, post-play interview summaries, and AI-generated, expert-validated recommendations prioritised by engagement impact.

The result is not a report that says "players seemed disengaged in section 3." It is a specific finding: attention collapsed at second 42, here is the UI element responsible, here is the recommended fix, here is its priority relative to the other findings.
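Emhance's models and classification rules are proprietary and are not described in this guide. As a rough illustration only of what smoothing, normalising, and classifying a per-second signal can look like, here is a minimal sketch. The moving-average window, the min-max scaling, the 0.4 threshold, the three-second minimum run, and every function name are assumptions made for the example, not Emhance's method.

```python
from typing import List, Optional


def moving_average(signal: List[float], window: int = 3) -> List[float]:
    """Smooth a per-second signal with a centred moving average."""
    half = window // 2
    smoothed = []
    for i in range(len(signal)):
        lo = max(0, i - half)
        hi = min(len(signal), i + half + 1)
        smoothed.append(sum(signal[lo:hi]) / (hi - lo))
    return smoothed


def normalise(signal: List[float]) -> List[float]:
    """Min-max scale a signal to the range [0, 1]."""
    low, high = min(signal), max(signal)
    if high == low:
        return [0.0 for _ in signal]
    return [(x - low) / (high - low) for x in signal]


def classify(signal: List[float], threshold: float = 0.4) -> List[str]:
    """Label each second as engaged or bored (threshold is an assumption)."""
    return ["engaged" if x >= threshold else "bored" for x in signal]


def first_sustained_drop(states: List[str], min_seconds: int = 3) -> Optional[int]:
    """Return the second at which the first run of at least
    min_seconds consecutive bored states begins, or None."""
    run_start = None
    for i, state in enumerate(states):
        if state == "bored":
            if run_start is None:
                run_start = i
            if i - run_start + 1 >= min_seconds:
                return run_start
        else:
            run_start = None
    return None


# Hypothetical per-second intensity values for one short session.
raw = [0.9, 0.8, 0.9, 0.2, 0.1, 0.1, 0.2, 0.8]
states = classify(normalise(moving_average(raw)))
drop_second = first_sustained_drop(states)
```

Requiring a sustained run rather than a single low second is one simple way to avoid flagging momentary noise as a collapse. A production pipeline would need far more than this.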

Use cases: where mobile game playtesting creates the most value

FTUE and onboarding

The first-time user experience is the highest-leverage window for playtesting. 97% of mobile game users churn by Day 30. The decisions players make in the first 15–30 minutes of a session determine whether they return the next day.

FTUE playtests with Emhance identify the exact moments where engagement drops during onboarding — before the drop shows up in your D1 metrics. They surface friction before the visible behaviour change (players complete a step but have already mentally disengaged) and identify whether the problem is individual UI, mechanic complexity, or sequence design.

Recommended for: D1 below target; new title preparing for soft launch; redesigned onboarding that needs pre-launch validation.

→ See: Mobile Game Onboarding Playtesting

Retention and core loop testing

When D7–D30 drop-off is unexpected and analytics can't explain the cause, a playtest on core loop and progression identifies where engagement is bleeding out. Emhance's level-based and event-based analysis maps engagement across gameplay segments — so teams see whether pacing is the problem, whether a specific mechanic is draining investment, or whether the reward cadence is creating boredom states at the wrong points in the session.
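To make the idea of level-based analysis concrete, here is a minimal sketch that takes one participant's per-second states and reports the share of bored seconds in each gameplay segment. The segment labels, the boundaries, and the function name are hypothetical. The real platform's segmentation is not documented here.

```python
from typing import Dict, List, Tuple


def bored_share_by_segment(
    states: List[str],
    segments: Dict[str, Tuple[int, int]],
) -> Dict[str, float]:
    """Fraction of seconds labelled bored within each segment.

    segments maps a label to (start_second, end_second), end exclusive.
    Segments that fall outside the session are skipped.
    """
    shares = {}
    for label, (start, end) in segments.items():
        window = states[start:end]
        if not window:
            continue
        shares[label] = window.count("bored") / len(window)
    return shares


# Hypothetical ten-second session split into three levels.
session = ["engaged"] * 4 + ["bored"] * 2 + ["engaged"] * 2 + ["bored"] * 2
levels = {"level_1": (0, 4), "level_2": (4, 8), "level_3": (8, 10)}
shares = bored_share_by_segment(session, levels)
```

Comparing these shares across levels is one way to see whether boredom clusters around a particular mechanic or builds steadily with pacing.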

→ See: Mobile Game Playtesting for Retention

Creative and UA testing

CTR tells you what got clicked. It does not tell you what created install intent. Emhance studies on creative variants measure emotional engagement and attention across the intro, middle, and end sections — segmented by creative type, character presence, and narrative structure.

Studies of 47 creative variants for one studio found character-driven narratives outperformed tutorial-heavy content by 25–30% on emotional engagement. Tutorial-heavy content underperformed by 40% on attention and amusement. Some of those tutorial creatives had acceptable CTR. None were producing the emotional investment that drives genuine install intent.

The finding changed the portfolio structure, not just the variant ranking.

ASO testing

App store creative — icons, screenshots, short videos — can be evaluated for emotional response and attention before the store page goes live. Gaze tracking maps what captures attention on the store page. Emotional response data identifies which creative is converting interest into genuine install intent. One studio using ASO testing saw +19% install intent from store page changes.

Competitor benchmarking

Emhance can run studies on competitor games — not just your own. If a competitor retains measurably better on a specific mechanic and your analytics show the gap but not the cause, a competitor study produces the diagnostic that your internal data never could. Several studios in Emhance's pipeline were unaware this was possible.

What teams receive: deliverables from an Emhance study

Every Emhance study delivers a set of findings through the platform:

Engagement curve — Second-by-second emotional intensity per participant and averaged across the group

Engagement / Boredom states — Binary state classification across the session, derived from intensity + concentration

Face scans — Face recording + screen recording synced frame-by-frame to the engagement curve, reviewable per session

Screenplay — Auto-transcribed gameplay narration and interview transcript

Prioritised recommendations — AI-generated, expert-validated action items ranked by engagement impact

Collaborative workspace — Insights can be tagged, highlighted, and discussed across your team

Raw data export — CSV/Excel download for custom analysis
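For teams planning custom analysis on the raw export, the sketch below shows how a group-average engagement curve could be rebuilt from a CSV file. The column names (participant_id, second, intensity) and the sample rows are assumptions for illustration. The actual export schema is not specified in this guide.

```python
import csv
import io
from collections import defaultdict
from typing import Dict, List


def group_average_curve(csv_text: str) -> Dict[int, float]:
    """Average intensity per second across all participants.

    Expects the hypothetical columns participant_id, second, intensity.
    """
    by_second: Dict[int, List[float]] = defaultdict(list)
    for row in csv.DictReader(io.StringIO(csv_text)):
        by_second[int(row["second"])].append(float(row["intensity"]))
    return {
        second: sum(values) / len(values)
        for second, values in sorted(by_second.items())
    }


# Made-up rows standing in for an exported file.
SAMPLE = """participant_id,second,intensity
p1,0,0.75
p2,0,0.25
p1,1,0.5
p2,1,0.0
"""

curve = group_average_curve(SAMPLE)
```

Reading from a string keeps the example self-contained. With a real file, the same function could be fed the file's contents.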

One studio cut 12 hours per playtest from their analysis workflow. Not because they watched footage faster — because the engagement signals surfaced the moments worth watching before anyone opened a raw video file.

Proof: validated results from Emhance studies

These are documented outcomes from real studies, not projections:

Tutorial sequence redesign (mobile RPG): Tutorial completion +12%; D1 retention +4–6 percentage points; D7 retention +4–7 percentage points; tutorial abandonment reduced from 25% to 15–18%

FTUE prestige screen fix: D1 retention improved; fix shipped in the same sprint

Creative testing — 47 variants: Character-driven creatives confirmed as consistent top performers; creative portfolio restructured from retroactive testing to an upstream production framework

Retention study (mobile studio): +3.6 percentage point retention improvement between levels

ASO testing: +19% install intent from store page creative changes

Workflow efficiency: 12 hours saved per playtest from analysis time reduction

Across studies, Emhance produces an average 7% increase in D1 retention.

Why 6 participants is enough (and more isn't always better)

One of the most common objections to playtesting research is sample size. "6 players isn't statistically significant" — and that is correct, if the research goal is measuring how preferences distribute across a player base.

Playtesting for friction is a different kind of research. The question is not "what percentage of players prefer mechanic A vs. mechanic B." The question is "does this experience hold together?" If six independent participants show the same attention collapse at the same moment in your FTUE, you do not need sixty participants to confirm the pattern is real. The usability research literature supports this — friction surfaces consistently within the first 5–8 sessions, with sharply diminishing returns after that. More participants add volume, not diagnostic precision.

For variant comparison studies, Emhance recommends 8–10 participants. For sprint-level FTUE decisions, 6 is sufficient and produces findings that move with the sprint cycle, not against it.
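The diminishing-returns argument can be sketched with a simple binomial model: if an issue affects a fraction p of players and participants are independent, the chance that at least one of n participants hits it is 1 - (1 - p)^n. The value of p below is illustrative, not a figure from Emhance's studies, and real participants are never perfectly independent.

```python
def detection_probability(p: float, n: int) -> float:
    """Chance that at least one of n independent participants
    encounters an issue affecting a fraction p of players."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be between 0 and 1")
    if n < 0:
        raise ValueError("n must be non-negative")
    return 1.0 - (1.0 - p) ** n


# Illustrative assumption: the issue affects 30% of players.
for participants in (3, 6, 8, 12):
    chance = detection_probability(0.3, participants)
    print(f"{participants} participants: {chance:.1%}")
```

Under that assumption, six participants give roughly an 88% chance of surfacing the issue and eight give roughly 94%, so each additional session adds less than the one before. Rare issues (small p) behave differently and need larger samples.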

Frequently asked questions

How long does a mobile game playtest take?

An Emhance study delivers findings in 2–3 weeks from kick-off to recommendation delivery. This includes research design, participant recruitment, sessions, neural net processing, and expert analysis. This is 10x faster than traditional lab-based playtesting and competitive with unmoderated video platforms — with significantly more diagnostic depth.

For sample size, cost, study design, and more, see the Mobile Game Playtesting FAQ.

Get started with mobile game playtesting

A 7% improvement in D1 retention has a significant revenue impact for any mid-sized studio. The proof of concept (POC) costs €1,500. You will have findings within two weeks, specific enough to inform the next sprint.

Book a research design call → Tell us the question you're trying to answer. We'll scope the right study type, participant profile, and session length. No commitment required.