Addressing flaws in standard testing methodologies with AI-powered playtesting

Even with clear hypotheses and a structured test plan, many playtests still fail to produce decisions teams feel confident acting on. The result is familiar: mixed feedback, flat retention, and uncertainty about what to change next.

This rarely comes down to effort. It is structural. Traditional playtesting relies heavily on self-reported data, and self-report carries predictable biases that narrow what you can actually learn.

Where traditional playtesting breaks down

1. Recall bias fragments feedback

When feedback is collected after a session, it depends on memory. Memory is selective. Players forget micro-moments that shaped their confidence, understanding, or motivation.

Those “invisible” moments are often what stabilise early retention. If they go unreported, they risk being altered or removed. A well-intentioned iteration can quietly reduce clarity or increase cognitive load.

2. Recency bias skews perceived importance

Players tend to emphasise what they experienced most recently. That does not always correlate with what mattered most.

Late-session mechanics, visual changes, or novelty elements can dominate feedback simply because they are fresh. Meanwhile, core loops or early-session friction that actually shape D1 retention remain under-analysed.

3. Social desirability reshapes responses

Players often describe the version of themselves they prefer to be. Competitive features, rankings, or strategic depth may be endorsed in surveys because they sound aspirational.

Observed behaviour often tells a different story. Especially in casual and hyper-casual genres, escapism and low-friction engagement tend to drive repeat play more than status mechanics.

The pattern is consistent: self-report filters experience through memory, identity, and language. That filtering introduces distortion.

Why emotion-driven AI changes the signal

At Emhance, we approach playtesting differently. We measure what players express in the moment, rather than relying solely on what they later describe.

Our system is built on facial expression analysis. During a session, we apply a stack of pre-trained neural networks that handle:

Face detection

Gaze estimation

Head pose tracking

Emotion classification across seven states: joy, surprise, anger, fear, sadness, disgust, and neutral

This generates raw affective data in real time.

The differentiator is what happens next. We apply proprietary post-processing algorithms to:

Filter noise and false positives

Correct inconsistencies

Aggregate signals into interpretable metrics

Translate outputs into gameplay-relevant insights

The result is structured emotional telemetry that complements behavioural data rather than replacing it.
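The post-processing steps listed above are proprietary and not specified in this article. As a rough illustration of what "filter noise" and "aggregate signals" can mean for per-frame emotion labels, here is a minimal sketch that assumes a simple majority vote over a sliding window. The function names, the window size, and the sample data are hypothetical and are not taken from Emhance's system.

```python
from collections import Counter


def smooth_labels(labels, window=5):
    """Replace each frame's label with the most common label in a centred window.

    Assumption: a plain majority vote stands in for the (undisclosed) noise filter.
    """
    half = window // 2
    smoothed = []
    for i in range(len(labels)):
        lo = max(0, i - half)
        hi = min(len(labels), i + half + 1)
        counts = Counter(labels[lo:hi])
        # Keep the original label unless another label is strictly more common,
        # so ties never introduce a transition that was not observed.
        best, best_n = labels[i], counts[labels[i]]
        for label, n in counts.items():
            if n > best_n:
                best, best_n = label, n
        smoothed.append(best)
    return smoothed


def share_by_emotion(labels):
    """Aggregate frame labels into the fraction of frames spent in each state."""
    total = len(labels)
    return {label: n / total for label, n in Counter(labels).items()}


# Hypothetical per-frame classifier output with one isolated "anger" frame.
frames = ["neutral"] * 4 + ["anger"] + ["neutral"] * 3 + ["joy"] * 4
clean = smooth_labels(frames, window=5)
print(share_by_emotion(clean))
```

In this sample the single "anger" frame is treated as a likely false positive and absorbed by its neighbours, while the sustained run of "joy" survives. A production filter would also need to weigh classifier confidence and frame rate, which this sketch ignores.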

From raw emotion to actionable metrics

We map emotional signals into three core metrics:

Arousal – emotional intensity

Valence – positive or negative tone

Focus – concentration during interaction

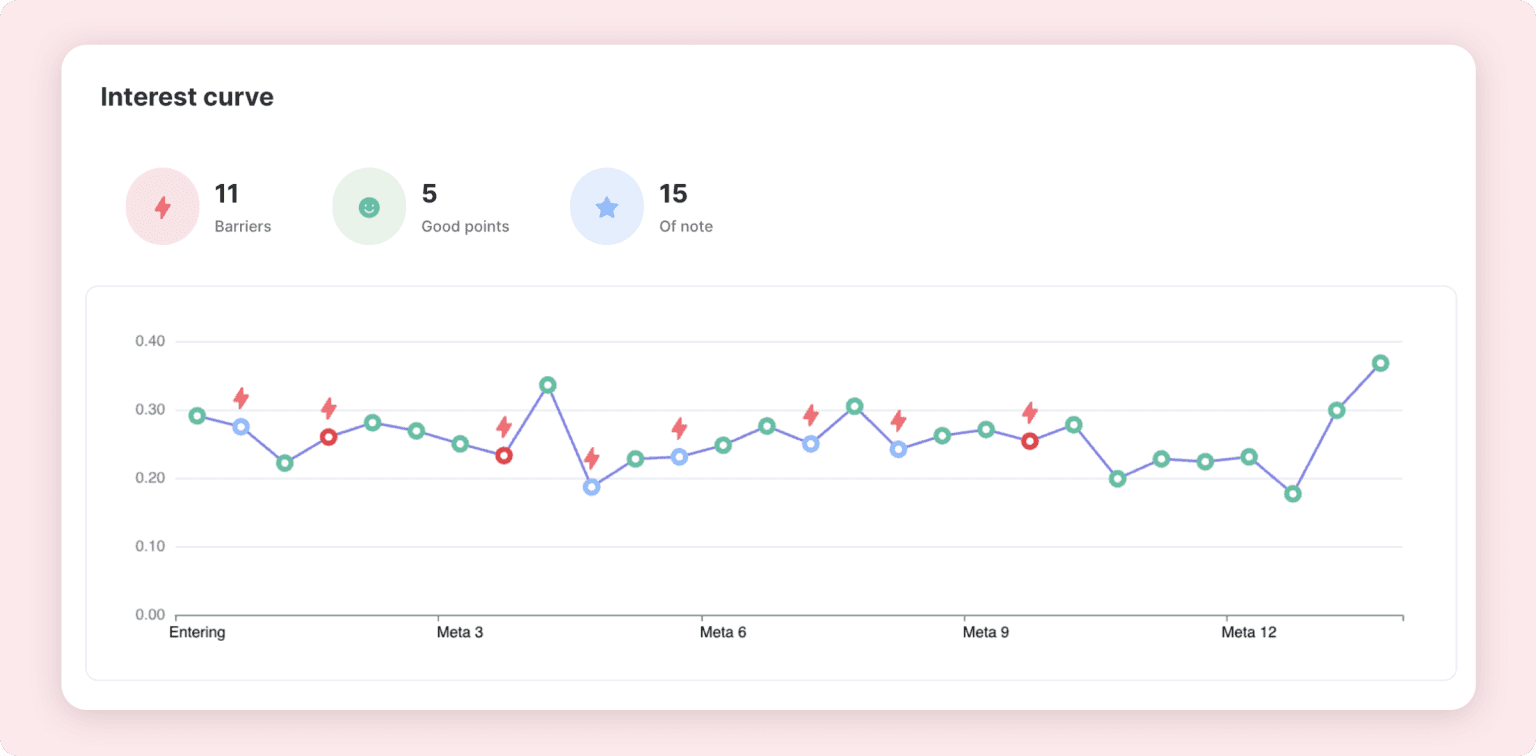

These are visualised across the session as an Interest Curve.

We never interpret these in isolation. For example:

High arousal with negative valence can still indicate engagement. Disgust-driven mechanics in “gross-satisfying” loops often operate this way.

High focus combined with rising arousal can signal productive challenge.

Dropping focus with neutral valence can indicate passive confusion or disengagement.

The value lies in the interaction between signals and how they map onto specific gameplay moments.
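To make the idea of reading signals in combination concrete, the three example patterns above can be written as simple rules. This is a sketch under stated assumptions: the value ranges (arousal and focus on 0 to 1, valence on -1 to 1), the thresholds, and the `Window` structure are all hypothetical, and the article does not describe how these patterns are actually detected.

```python
from dataclasses import dataclass


@dataclass
class Window:
    """Averaged signals for one slice of a session (hypothetical structure)."""
    arousal: float        # assumed range 0..1
    arousal_delta: float  # change versus the previous window
    valence: float        # assumed range -1..1
    focus: float          # assumed range 0..1
    focus_delta: float    # change versus the previous window


def interpret(w, high=0.6, neutral_band=0.2, trend=0.1):
    """Label a window using the three combinations described in the text.

    All thresholds are illustrative defaults, not calibrated values.
    """
    if w.arousal >= high and w.valence <= -neutral_band:
        return "engaged (negative tone)"
    if w.focus >= high and w.arousal_delta >= trend:
        return "productive challenge"
    if w.focus_delta <= -trend and abs(w.valence) < neutral_band:
        return "passive confusion or disengagement"
    return "no clear pattern"


print(interpret(Window(0.8, 0.0, -0.5, 0.5, 0.0)))   # engaged (negative tone)
print(interpret(Window(0.3, 0.0, 0.05, 0.4, -0.2)))  # passive confusion or disengagement
```

Fixed rules like these are only a starting point. The same readings can mean different things depending on the gameplay moment they coincide with.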

Studios receive dashboards that highlight where friction accumulates, where cognitive load spikes, and where emotional engagement collapses. Each flagged moment is paired with practical recommendations.

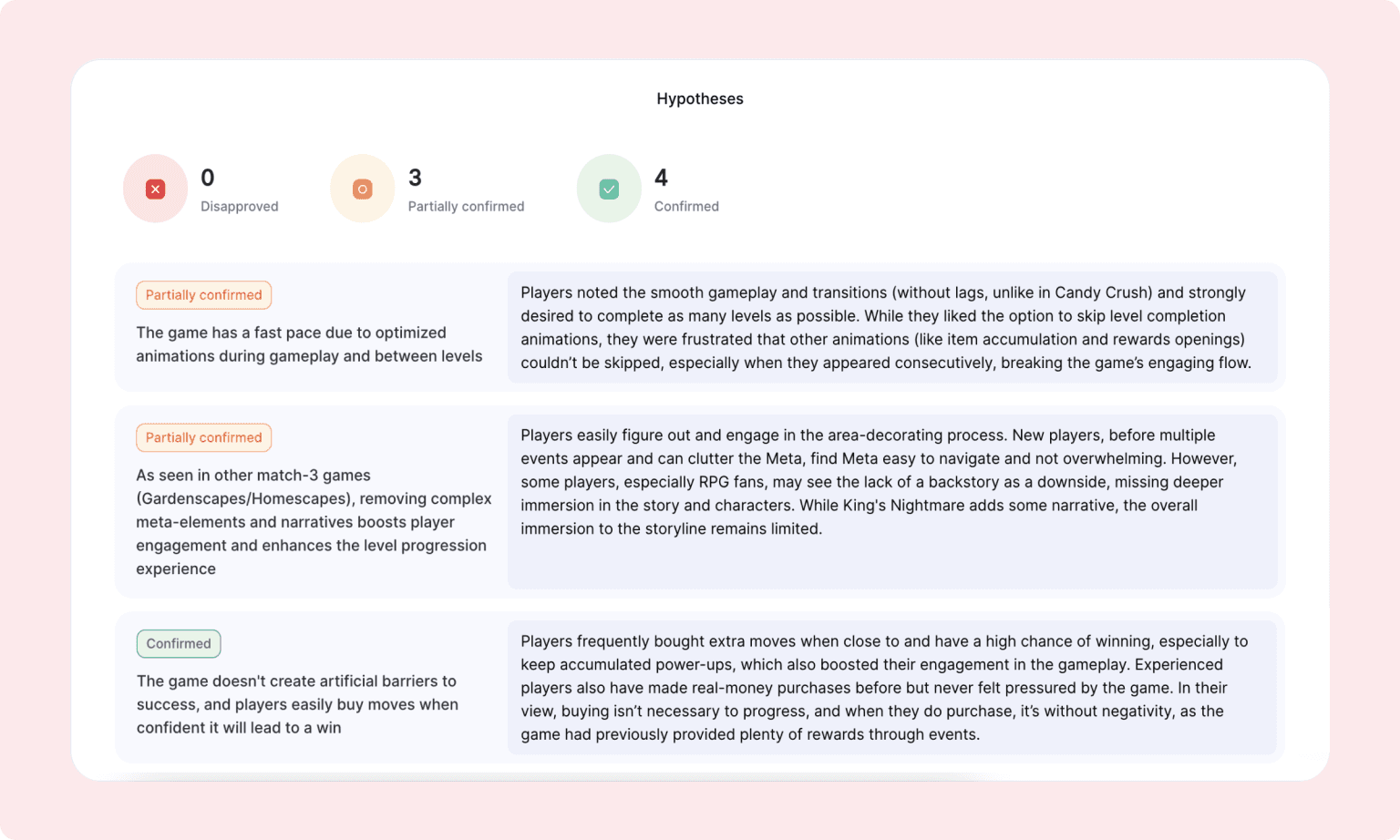

How we run AI-powered playtests

A qualitative playtest typically involves up to 12 participants, which keeps iteration fast and costs proportionate. Participants are selected against criteria defined with the studio: location, age, gender, genre affinity, spending profile, and other relevant segments.

A typical session runs as follows:

Players progress independently through the build. Moderators do not intervene.

A custom plugin records screen activity and captures facial data.

Emotional and attentional signals are synchronised with gameplay events.

Screens and mechanics of interest are analysed frame by frame.

Insights are delivered via structured dashboards with prioritised recommendations.
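The synchronisation step in the list above can be pictured as attaching each signal sample to the gameplay event that was active when it was recorded. The sketch below assumes timestamps in seconds on a shared clock and a list of event start times. The event names, the sample values, and the `mean_signal_by_event` helper are hypothetical.

```python
from bisect import bisect_right
from statistics import mean


def mean_signal_by_event(samples, events):
    """Average a signal within each gameplay event.

    samples: list of (timestamp_s, value) pairs.
    events:  list of (start_s, name) pairs sorted by start time; each event
             is assumed to last until the next one begins.
    """
    starts = [start for start, _ in events]
    by_event = {}
    for t, value in samples:
        # Index of the last event that started at or before this sample.
        idx = bisect_right(starts, t) - 1
        if idx < 0:
            continue  # sample was recorded before the first logged event
        by_event.setdefault(events[idx][1], []).append(value)
    return {name: mean(values) for name, values in by_event.items()}


# Hypothetical focus samples and event log for one session.
events = [(0.0, "tutorial"), (10.0, "first_combat"), (25.0, "shop")]
focus = [(1.0, 0.75), (5.0, 0.25), (12.0, 0.5), (20.0, 0.25), (30.0, 1.0)]
print(mean_signal_by_event(focus, events))
# {'tutorial': 0.5, 'first_combat': 0.375, 'shop': 1.0}
```

Binary search keeps the lookup cheap even when a session produces many samples per second. A real pipeline would also have to reconcile clock drift between the video capture and the game's event log.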

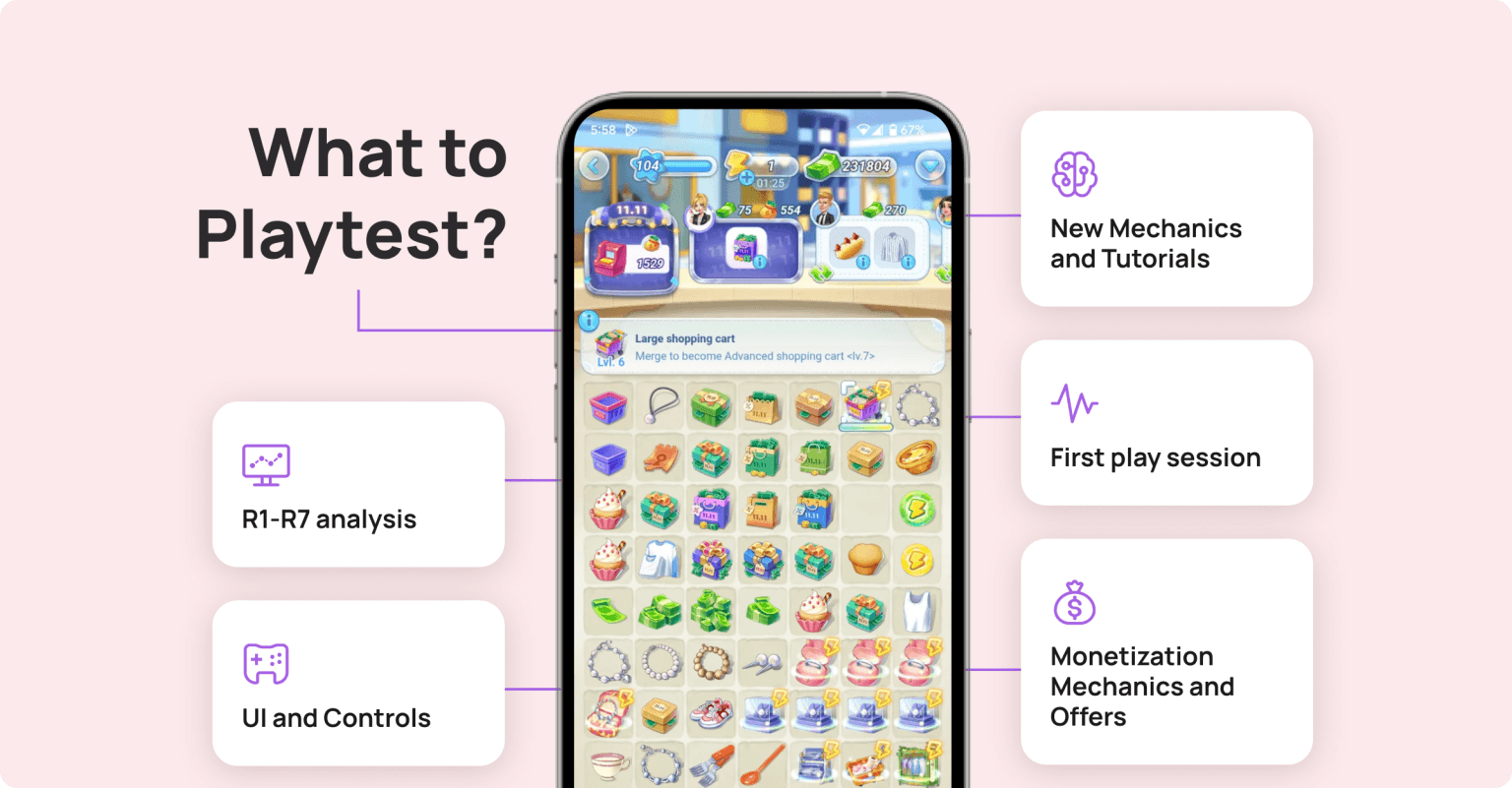

Studios can test early prototypes, FTUE flows, monetisation layers, live updates, or competitive benchmarks. The same methodology applies in pre-production and post-launch environments.

Connecting qualitative signal to real player impact

Emotional insight becomes significantly more powerful when linked to behavioural segmentation. When we connect playtest findings to in-game event data, teams can answer the harder question:

Are the players who struggled in testing representative of 5% of my audience, or 70% of my revenue-driving cohort?

That linkage shows how far a small-sample qualitative finding extends across the wider player population.
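The question above comes down to two ratios: the share of players who show the same behavioural marker as the struggling testers, and the share of revenue those players account for. A minimal sketch follows, assuming in-game event data has already been reduced to one record per player. The `tutorial_retries` field, the threshold, and the sample records are hypothetical.

```python
def segment_share(players, in_segment):
    """Return (audience_share, revenue_share) for players matching a predicate.

    players:    list of dicts that each carry a 'revenue' value.
    in_segment: function returning True for players who show the marker.
    """
    total_players = len(players)
    total_revenue = sum(p["revenue"] for p in players)
    matched = [p for p in players if in_segment(p)]
    audience_share = len(matched) / total_players if total_players else 0.0
    revenue_share = (
        sum(p["revenue"] for p in matched) / total_revenue if total_revenue else 0.0
    )
    return audience_share, revenue_share


# Hypothetical player records: the marker is three or more tutorial retries.
players = [
    {"tutorial_retries": 3, "revenue": 30.0},
    {"tutorial_retries": 0, "revenue": 5.0},
    {"tutorial_retries": 0, "revenue": 5.0},
    {"tutorial_retries": 4, "revenue": 40.0},
]
audience, revenue = segment_share(players, lambda p: p["tutorial_retries"] >= 3)
print(audience, revenue)  # 0.5 0.875
```

In this invented sample the struggling segment is half of the audience but most of the revenue, which is the kind of gap that changes how urgently a fix is prioritised.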

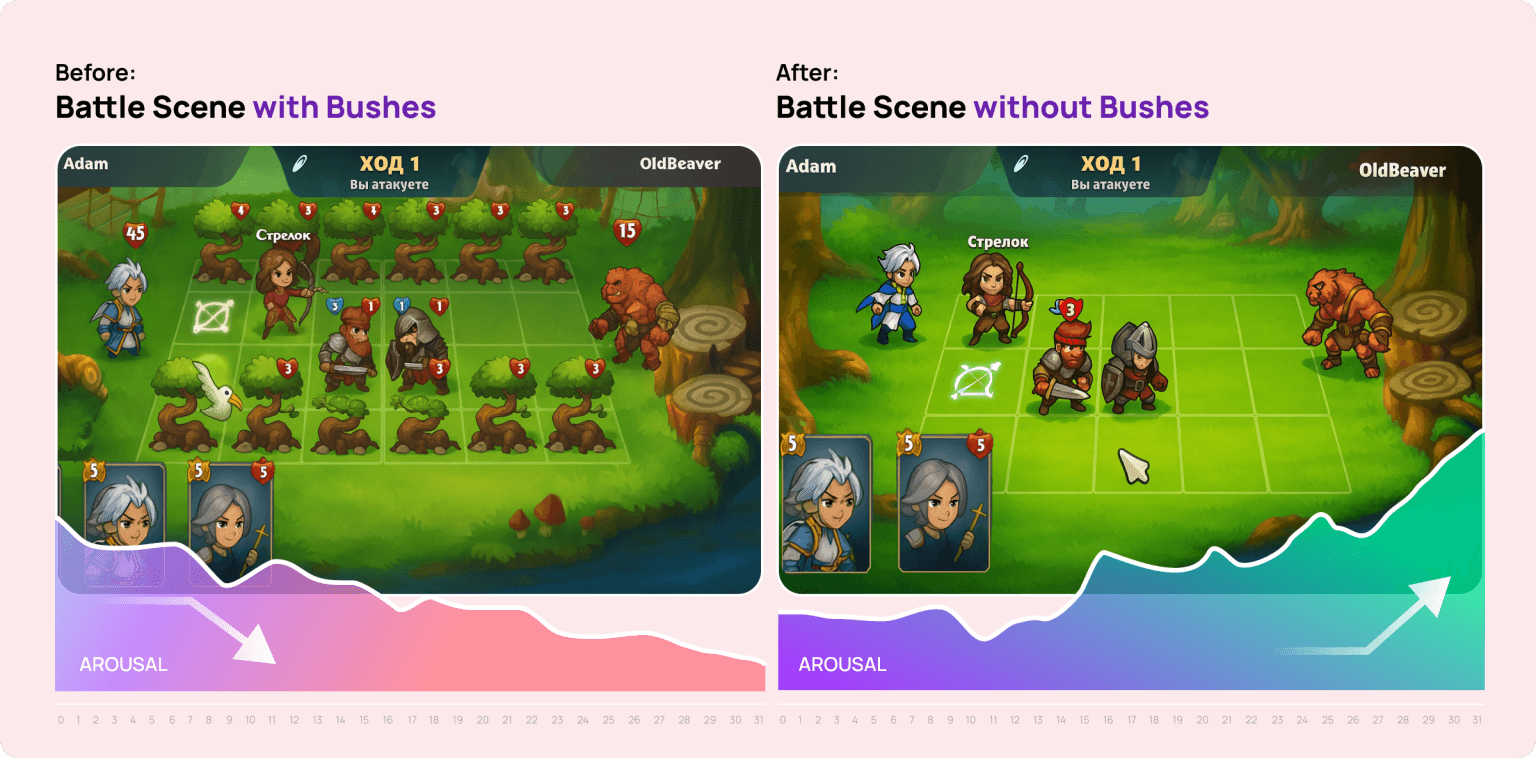

Example: reducing cognitive overload

In a recent turn-based strategy title, we observed elevated arousal combined with declining focus during early combat sequences. Emotional mapping pointed to cognitive overload rather than healthy challenge.

Visual density was the issue. Decorative elements and peripheral noise diluted clarity.

The recommendation was precise: remove non-functional visual elements to reduce cognitive load. After simplification, the studio observed a 5% lift in retention.

The improvement did not come from adding new features. It came from aligning emotional signal with design intent.

Traditional playtesting captures what players say. Emotion-driven AI captures what players experience. When combined with behavioural data, it provides a stable foundation for retention, monetisation, and iteration decisions.

Interested in testing your game? Get in touch.